How does medical misinformation hurt you?

Today, I’d like to start with a question for the non-clinicians reading this: Where do you get your health information?

For many people, the answer is TV news and social media. These can be wonderful sources of accessible information, allowing us to stay informed on current events from all around the world. But they can also dangerously propagate health misinformation.

Earlier this month, YouTube signaled that it’s taking the issue of medical misinformation more seriously. The company’s health division revealed a new plan to more heavily moderate content that deals with health information, with a particular focus on cancer treatment and prevention. In my view, this is a welcome (if overdue) move from the video-sharing giant, and an acknowledgment of how dangerous health misinformation can really be.

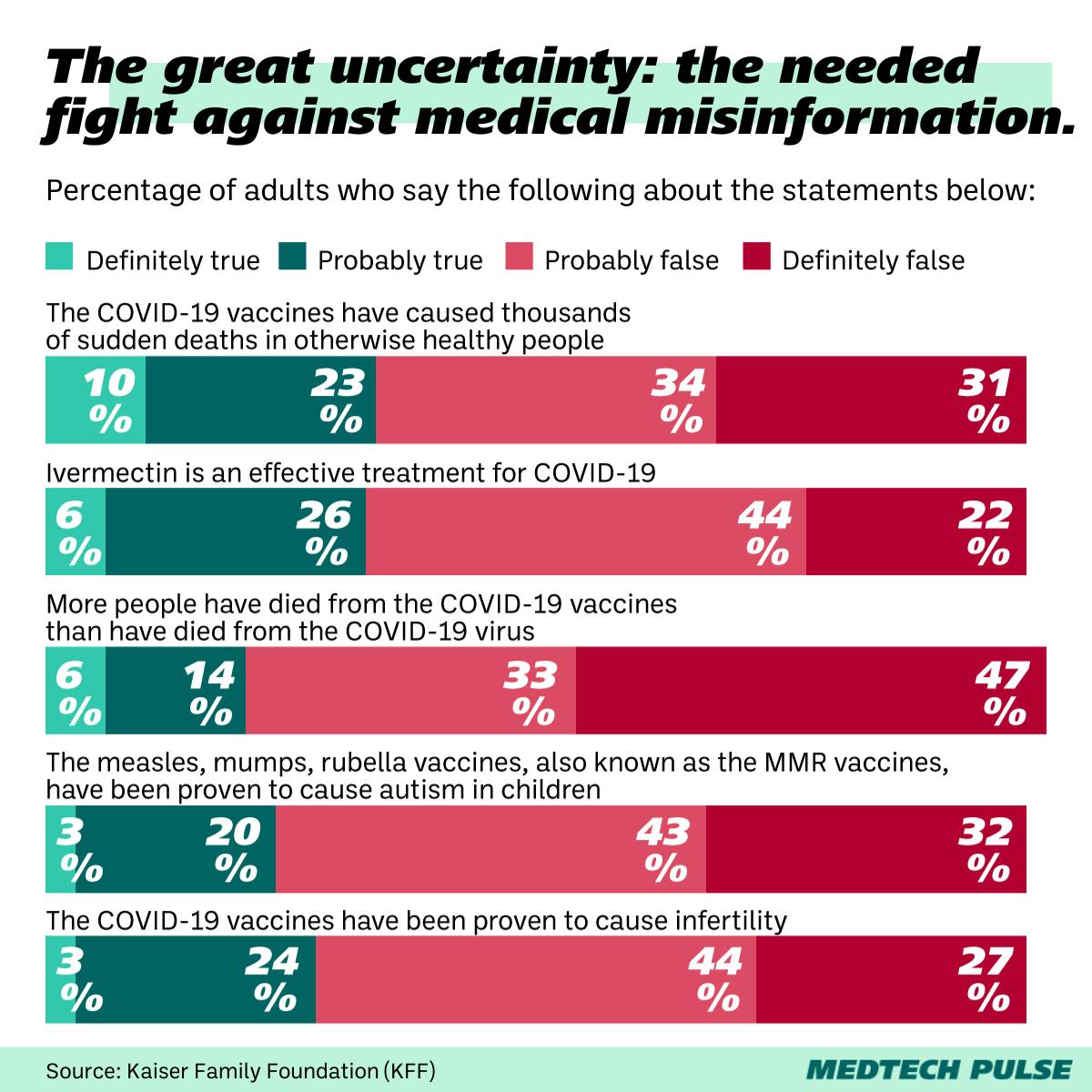

Why is online medical misinformation such a big deal? Here’s a number that I think illustrates it well: Around 3 in 10 Americans still believe ivermectin treats COVID-19. If you’re a frequent reader of MedTech Pulse, I hope this isn’t the first time you’re hearing that you shouldn’t be taking the antiparasitic drug for the respiratory virus. But if you had ventured into the COVID-skeptic corners of social media in 2021, you might have been convinced otherwise.

Now, a survey confirms what many of us have suspected: Social media use seems to make people worse at recognizing misinformation. Weekly social media users are more likely to have heard false medical claims and to say they are probably or definitely true.

This trend can have awful consequences. On the individual level, we’re looking at people opting for ineffective approaches to medical treatment, for instance, using certain unstudied herbs to treat cancer instead of traditional oncology. Those choices can have disastrous health effects for the individual. And on the population level, the dissemination of medical misinformation erodes trust in science and healthcare providers, which weakens public health measures (this is part of why we see so much vaccine hesitancy).

Our industry has an important role to play in confronting medical misinformation: taking responsibility for it and stopping it. This is especially true when it comes to the growing use of generative AI in health contexts. I applaud growing efforts around finding and fixing generative AI’s propensity for displaying bias and incorrect health information. I was interested to see the U.S. government’s approach to guerrilla generative AI workshopping, where attendees of a hacking conference tried to “break” chatbots into revealing the triggers for bias and misinformation. This is a perfect example of how sometimes, the best way to improve a technology is to learn from mistakes and distribute findings. But it’s best if skilled users, as opposed to vulnerable patients, are the ones doing the breaking.

When it comes to medical misinformation, I believe we are coming up against a false sense of security. As you’ll see in our articles this week on the sleep crisis and tuberculosis testing, this false sense of security often doesn’t get questioned until something terrible happens. We can’t afford to wait around and underestimate public health issues like this.

And to that end, I’ll leave you today with this: Just because you think you know better than to do something like using ivermectin for COVID, that doesn’t mean you are unaffected by health misinformation. It functions as a specter, damaging trust in our overall health system and making our world less healthy as we continue to let it fester. With our innovative spirit, I think it’s time we dragged health misinformation out into the open and eliminated it, using the kind of creative solutions that make me so proud of our industry. We can’t let medical misinformation keep undermining the healthy future we all deserve.