GPT-4 meets medicine

Ever since ChatGPT opened to the public, the news about it hasn't stopped. Five months on, ChatGPT Plus subscribers already have access to an updated model, GPT-4. The pace of OpenAI’s progress is impressive. But it’s not only the developer driving this rapid race: the platform counted 100 million users two months after launch, setting a record for the fastest-growing user base.

And as we know, the potential impact of AI technology on healthcare is immense. Scientists have put ChatGPT to the test and were impressed by the results. A recent headline discussed how the “newest version of ChatGPT passed the US medical licensing exam with flying colors.” The chatbot even diagnosed a rare condition.

Both users and businesses are getting used to it so quickly that ChatGPT already appears to have a fixed spot in the medtech toolkit. An adoption par excellence? Of course, no new technology or tool is implemented without obstacles.

The researchers behind the medical licensing exam study mentioned above pointed out one such obstacle: GPT-4 isn't always right, and it follows no ethical guidelines. It makes mistakes that would come at patients' expense, and, unlike human physicians, it didn’t take the Hippocratic oath. In this edition’s lead article, we dive into the large improvements healthcare can gain from implementing AI technology (e.g. medical scribes). However, accuracy standards often seem insufficiently regulated when it comes to what’s best for the patient. So the question is: how far can this technology be implemented ethically while still being a reliable tool for healthcare? How can regulation help? Will regulation slow the pace of medical AI innovation to a harmful degree?

There is movement in this discussion. The call for regulation is getting louder. Italy became the first country in Europe to ban ChatGPT. The European Parliament is debating stricter regulation of this popular artificial intelligence technology. It is also important to mention that the need for regulation in digital health innovation concerns not only ChatGPT; it comes with the introduction of Health 4.0. In the U.S., medical device developers must now include cybersecurity plans in their applications or submissions for regulatory review of cyber devices, a requirement meant to set higher standards for cybersecurity.

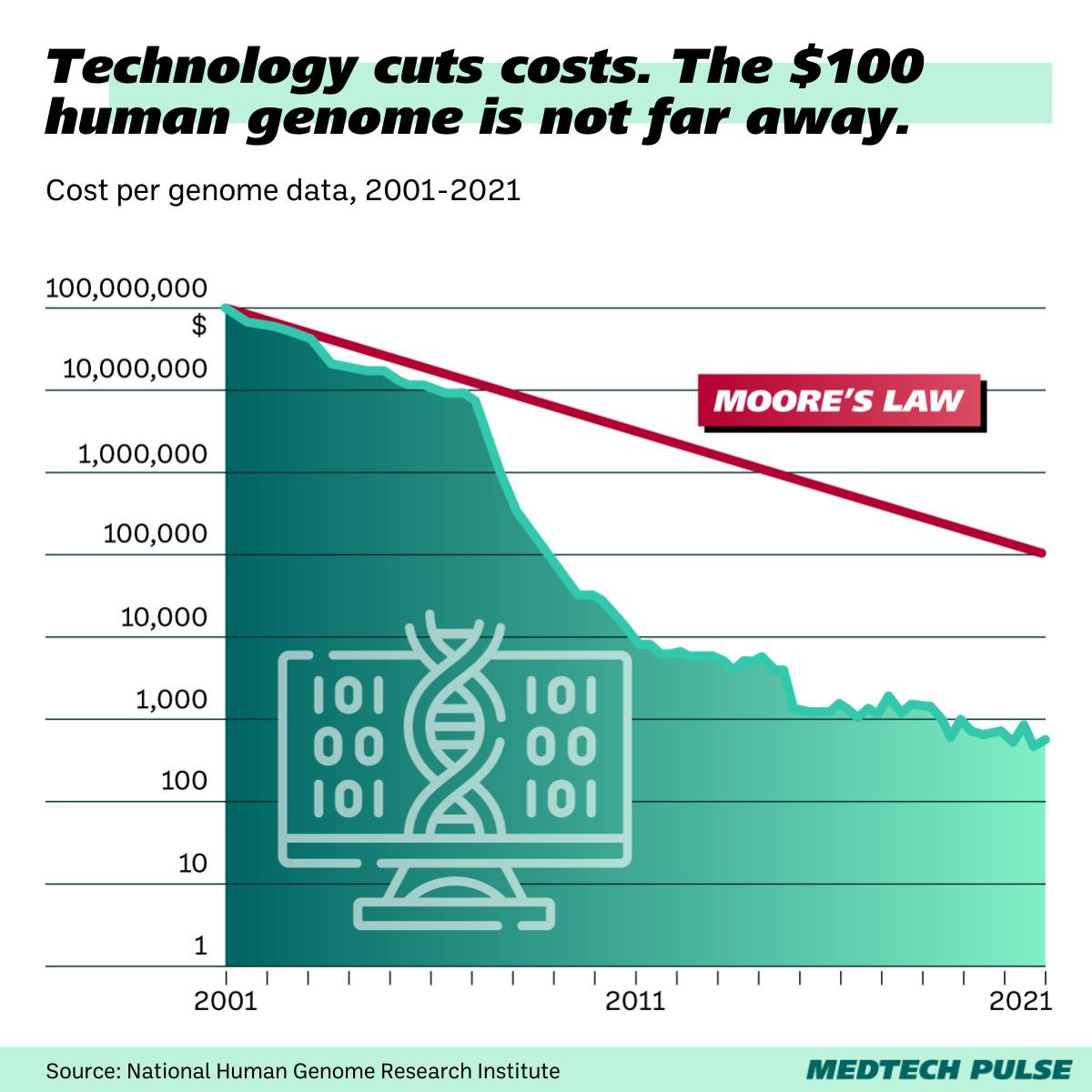

The chart above shows the falling cost of sequencing a human genome in the field of advanced diagnostics and analytics. Healthcare providers are now in a position to offer whole-genome sequencing to large patient populations at an affordable cost. That means more data can be collected and more validated research is possible, but also that the volume of data being processed is constantly increasing. Developments in medical diagnostics promise to change how diseases are detected, allowing them to be identified in early stages before they develop into serious health threats. This trend is especially promising for cancer research and prevention. As these diagnostics become available to society at large, the possibility of providing a customized, unique treatment and prevention protocol for each individual does not seem too far away. Of course, this approach to treatment requires access to and interaction with the most personal health information an individual has. And so, it demands a high standard of data protection and accuracy.

Let’s support the power of advanced diagnostics to push the shift of healthcare from treatment to prevention, from disease to health. At the same time, let’s acknowledge that the call for greater regulation of digital health technology is right. Let’s be there to protect users’ data and support that shift, to ensure the new tools we’re excited about are trustworthy.